Getting AWS provisioned with everything you need to host a website using Ansible 2.9.

Dallas, a seasoned professional with a diverse background, transitions seamlessly between roles as a systems admin turned developer, technical writer, and curriculum developer at Red Hat. With a knack for unraveling complex concepts, he crafts engaging materials primarily in DocBook, guiding enthusiasts through the intricacies of Red Hat's certification courses. In his earlier days, Dallas's passion for Anime led him to contribute to Anime News Network, channeling his creativity and expertise into captivating content. His contributions extended beyond writing as he interviewed prominent figures in the Anime industry, offering insights into their creative processes and visions. Beyond his professional pursuits, he's a devoted husband and father, cherishing moments with his loved ones. Dallas's journey in the tech industry spans various roles, from a security developer at NTT Security to an operations architect overseeing Linux servers for commercial transcoding. His tenure at esteemed institutions like Goldman Sachs and Lockheed Martin has honed his skills as a systems engineer, instilling in him a deep-rooted understanding of complex systems. An avid FPV pilot, Dallas finds exhilaration in soaring through the skies with his drones, often contemplating the lessons learned from his aerial adventures. His diverse experiences, including serving as a naval submariner aboard the USS Alexandria and pursuing higher education in England, enrich his perspective and fuel his thirst for knowledge.

Set up a VPC on AWS using Ansible.

When Red Hat asked me to write a training course on AWS with Ansible for Pluralsight.com, I saw a great opportunity to get people who are not sysadmins or DevOps engineers up and running with a quick website using Ansible.

Let us say that you are learning Flask, Django, or, to a lesser extent, Ruby on Rails, and you want to test your application on a cloud service.

Ansible is an open-source tool written in Python that automates repetitive, time-consuming setup work. It is powerful enough to orchestrate an entire application environment no matter where it is deployed. One of my favorite things about Ansible is that it is agentless, meaning that you do not need to install software on the remote server as long as you can SSH into it. AWS is the cloud computing service offered by Amazon. It is robust and used by a ton of big-name companies, including Netflix. We will create a user, a VPC, and two t2.micro instances using Ansible.

AWS offers a free tier that includes 750 hours of Linux (including RHEL 8) and Windows t2.micro instance usage each month for one year. This gives us a great opportunity to learn new processes and build a skeleton for when you decide to launch your app or website live.

In this post you will learn how to:

Create a VPC.

Create an internet gateway.

Create a public subnet.

Create a routing table.

Create a security group.

Locate the latest RHEL8 AMI to use.

Create an SSH key for provisioning Amazon EC2 instances.

Launch an EC2 instance.

Save the image as an AMI.

Run a cleanup script that will tear down everything we built.

Set up the EC2 instance.

Once you provision the instance with your software then we will save an AMI.

Let's prepare the workstation we are working from. The Ansible control node manages all other computers in a given network. It can run on any Linux machine with Python 2 or Python 3 installed, and it normally resides outside the AWS network. The control node requires the installation of several packages:

Use the following command to install pip (Python package manager):

[spohnz@lab ~]$ sudo yum install python3-pip

Use pip to install Ansible 2.9 and the AWS module dependencies.

[spohnz@lab ~]$ sudo pip3 install ansible boto boto3

- Sign in to the AWS Management Console and open the IAM console at AWS

- In the navigation pane, choose Users and then choose Add user.

- In the Username field type testuser

- Choose Programmatic access to enable access and secret keys.

- Click Next: Permissions.

- Add user to a new group:

- In the group name field type testgroup

- Select the boxes for AmazonEC2FullAccess and AmazonVPCFullAccess and click Create group.

- Click Next: Tags

- Assign tags to user (this is optional). Tags enable you to add customizable key-value pairs to resources.

- Click Next: Review

- Click Create User

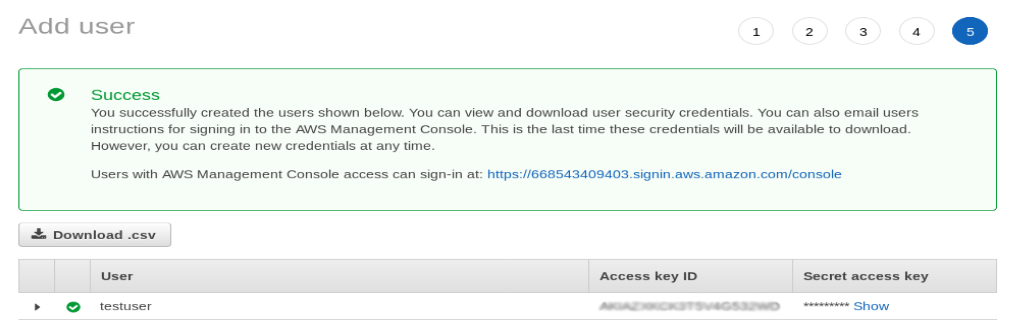

Download the .csv file. This is the only opportunity to save this file. The secret key will later be stored in a variables file called info.yml. If you lose access to the secret key, delete the key and create another one.

Figure 1. Created User


Let's create our variable file.

1. Create a working directory that we will build in.

2. Then create a directory inside this directory called vars.

3. Create a file inside the vars directory called info.yml. This file will store the AWS keys, the region, and other variables used in the playbooks.

aws_id: YOUR_AWS_ACCESS_KEY_ID

aws_key: YOUR_AWS_SECRET_KEY

aws_region: us-east-2

ssh_keyname: demo_key

Choose the region closest to your location. You can find the regions in the dropdown at the upper right corner of the AWS web console. Storing these values as variables keeps them in one place, so every playbook can reuse them. If you want a more secure way of storing your secret keys, use ansible-vault. Ansible Vault is a feature of Ansible that allows you to store sensitive data such as passwords or keys in encrypted files, rather than as plaintext in playbooks or roles.

The next step is to create a playbook to connect to the localhost.

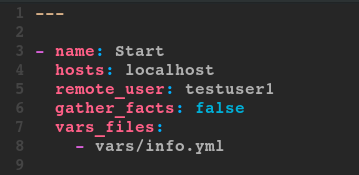

Figure 2. Setup


This code will be the standard heading of our playbooks. What we will be focusing on mainly are the cloud modules themselves.

The host will be localhost since you are working from the control node. If you are setting up instances in a private network, then you can install Ansible on a jump server in the AWS public VPC. Ansible ensures your cloud deployments work seamlessly across public, private, or hybrid cloud as if you were building a single system.

The remote_user is the testuser we created and we do not need to gather facts. vars_files tells Ansible where to find our variable file.
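The heading was shown as a screenshot, so here is a minimal sketch of what the description above suggests it looks like. The play name Start is taken from the run output shown later in this post; the empty tasks list is only a placeholder, and the exact layout of the original figure is an assumption.

```yaml
---
# Sketch of the standard playbook heading (reconstructed from the description,
# not copied from the original figure).
- name: Start
  hosts: localhost          # the cloud modules run from the control node
  remote_user: testuser     # the IAM user created earlier
  gather_facts: false       # host facts are not needed for AWS API calls
  vars_files:
    - vars/info.yml         # provides aws_id, aws_key, aws_region, ssh_keyname
  tasks: []                 # placeholder; the cloud module tasks go here
```

Every task sketched after this point would sit under the tasks key of this play.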

Ansible cloud support modules make it easy to provision networks, instances and complete application infrastructures. From here we will explore the cloud modules that we will be using.

The complete list of cloud-related modules is available on the Cloud Modules page of the Ansible documentation.

The ec2_vpc_net – Configure AWS virtual private clouds

There have been some name changes in the Ansible modules, so refer to the cloud module documentation at https://docs.ansible.com/ansible/latest/modules/list_of_cloud_modules.html

The ec2_vpc_net module will create, modify, and terminate AWS virtual private clouds. There are a number of parameters required in order for this module to run.

cidr_block = This is the primary CIDR of the VPC. It is used in conjunction with the name parameter to ensure idempotency.

name = The name to give your VPC. This is used in combination with cidr_block to determine if a VPC already exists.

Take a look at all the parameters offered in the ec2_vpc_net module: https://docs.ansible.com/ansible/latest/modules/ec2_vpc_net_module.html#ec2-vpc-net-module

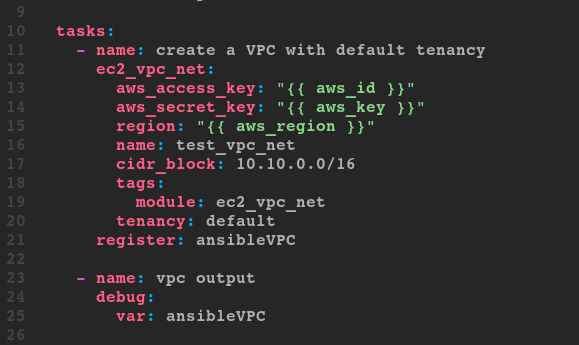

Figure 3. ec2_vpc_net


- The variable file info.yml holds variable information.

- Variables are recalled using curly brackets {{ variable }}.

- The name and the cidr_block are required parameters and must be included within this module.

- One or more tags are given as key-value pairs.

- Tenancy is set to default.

- In an enterprise environment, you may need a dedicated tenancy.

- Dynamic values that are returned from the registered variables are used in various different plays.

- The debug module prints the return values saved by the register keyword.
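The points above can be sketched as two tasks. The task names come from the run output shown later, the VPC name and CIDR match the values used in the cleanup playbook at the end of this post, and the tag is an assumption added for illustration.

```yaml
# Sketch only: tasks to place under the play's tasks key.
- name: create a VPC with default tenancy
  ec2_vpc_net:
    aws_access_key: "{{ aws_id }}"
    aws_secret_key: "{{ aws_key }}"
    region: "{{ aws_region }}"
    name: test_vpc_net            # required, used with cidr_block for idempotency
    cidr_block: 10.10.0.0/16      # required
    tags:
      module: ec2_vpc_net         # assumed tag, any key-value pair works
    tenancy: default
  register: ansibleVPC            # saved for later plays

- name: vpc output
  debug:
    var: ansibleVPC               # prints the registered return values
```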

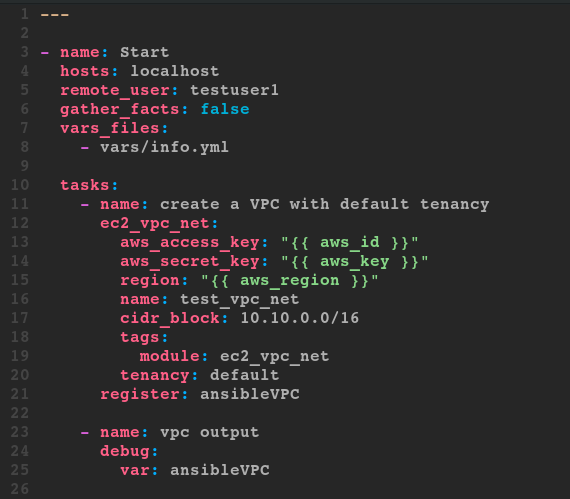

So far the playbook looks like this:

Figure 4. So-Far


Save it as aws_playbook.yml and run a syntax check.

[spohnz@lab ~]$ ansible-playbook --syntax-check aws_playbook.yml

After the syntax is verified, run the play.

[spohnz@lab ~]$ ansible-playbook aws_playbook.yml

    PLAY [Start] ********************************

    TASK [create a VPC with default tenancy] ****
    ok: [localhost]

    TASK [vpc output] ***************************
    ok: [localhost] => {
        "ansibleVPC": {
            "changed": false,
            "failed": false,
            "vpc": {
    ...output omitted...

The play outputs JSON content that is used later. Go to the AWS web console to confirm the creation of test_vpc_net:

1. In the AWS web console, click on the Services drop-down menu in the upper left corner, then VPC under Networking and Content Delivery.

2. Look at the VPCs resource to find one there. Click on the box.

Notice the VPC called test_vpc_net. The parameters match those configured in the playbook.

Navigate to the tags tab at the bottom of the page to verify that our tags are present.

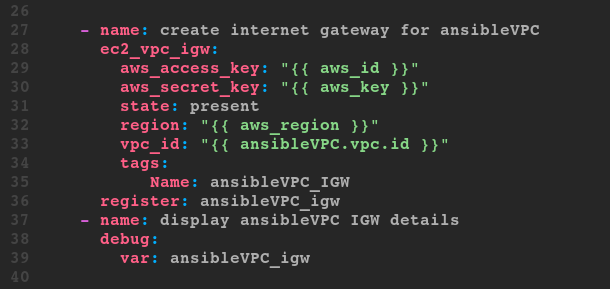

The ec2_vpc_igw – Manage an AWS VPC Internet gateway

To attach an internet gateway to the newly created VPC, take a look at some of the parameters and visit the documentation page for more. The vpc_id parameter is required to run this play. Since the ID is unknown until after the VPC is created, we take it from the JSON returned by the VPC task and printed by the debug module. Following the structure down to the ID gives ansibleVPC.vpc.id, which we use in this play to fill in the required vpc_id field.

The state parameter controls whether the IGW is created or deleted. The vpc_id comes from the ansibleVPC variable registered in the prior play. Name the tag ansibleVPC_IGW. Register the module output as ansibleVPC_igw. The gateway ID is required later to create a route table, and it appears in the debug printout at the end of the play.
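A minimal sketch of the gateway play described above follows. The task names are assumptions; the tag, the registered variable name, and the vpc_id expression come from the text.

```yaml
# Sketch only: tasks to place under the play's tasks key.
- name: create an internet gateway for the VPC   # assumed task name
  ec2_vpc_igw:
    aws_access_key: "{{ aws_id }}"
    aws_secret_key: "{{ aws_key }}"
    region: "{{ aws_region }}"
    state: present                         # present creates, absent deletes
    vpc_id: "{{ ansibleVPC.vpc.id }}"      # from the registered VPC result
    tags:
      Name: ansibleVPC_IGW
  register: ansibleVPC_igw

- name: igw output                         # assumed task name
  debug:
    var: ansibleVPC_igw                    # includes gateway_id for the route table
```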

Figure 5. Image caption


Run the play to create the IGW.

[spohnz@lab ~]$ ansible-playbook aws_playbook.yml

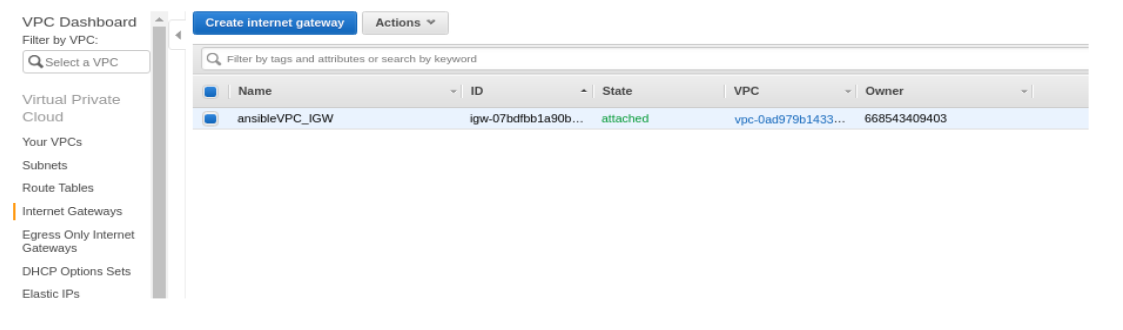

Navigate to the AWS console, click on Internet Gateways in the VPC dashboard to verify the creation of the internet gateway.

Figure 6. Image caption


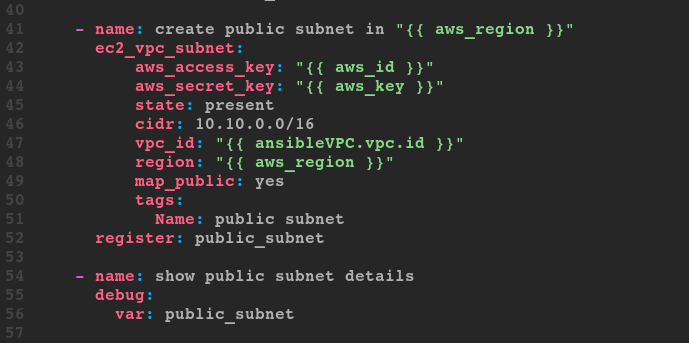

The ec2_vpc_subnet - Manage subnets in AWS virtual private clouds

See the documentation on ec2_vpc_subnet to review all the parameters of this module. https://docs.ansible.com/ansible/latest/modules/ec2_vpc_subnet_module.html#ec2-vpc-subnet-module

The vpc_id parameter is required to create a subnet with ec2_vpc_subnet.

This value is available in memory from the variable registered in the first play: "{{ ansibleVPC.vpc.id }}"

Set the state to present.

Declare the CIDR block.

There is one tag named public subnet.

The map_public parameter ensures that instances launched into this subnet are assigned a public IP address by default.
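Put together, the subnet play might look like the sketch below. The CIDR is an assumption based on the value the cleanup playbook later uses to remove the subnet, and the task names are illustrative. The registered name public_subnet is the one the route table section refers to.

```yaml
# Sketch only: tasks to place under the play's tasks key.
- name: create a public subnet             # assumed task name
  ec2_vpc_subnet:
    aws_access_key: "{{ aws_id }}"
    aws_secret_key: "{{ aws_key }}"
    region: "{{ aws_region }}"
    state: present
    vpc_id: "{{ ansibleVPC.vpc.id }}"      # required
    cidr: 10.10.0.0/16                     # assumed, matches the cleanup playbook
    map_public: true                       # instances get a public IP by default
    tags:
      Name: public subnet
  register: public_subnet

- name: subnet output                      # assumed task name
  debug:
    var: public_subnet
```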

Check the syntax of the playbook, then run it.

Figure 7. Create public subnets


Run the play to create the subnet.

[spohnz@lab ~]$ ansible-playbook aws_playbook.yml

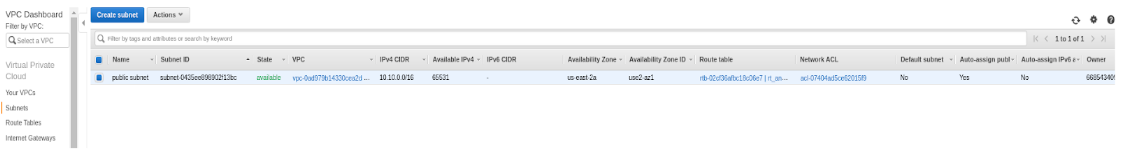

Navigate to the AWS console, click on Subnets in the VPC dashboard to verify the creation of the subnet.

Figure 8. Image caption


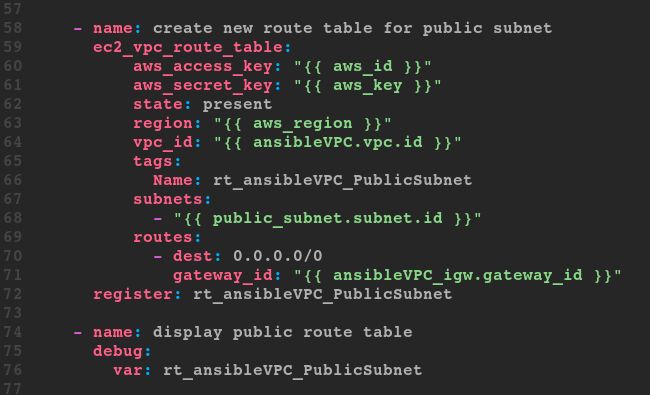

The ec2_vpc_route_table - Manage routing tables

There are many parameters for the ec2_vpc_route_table. Read the documentation at https://docs.ansible.com/ansible/latest/modules/ec2_vpc_route_table_module.html#ec2-vpc-route-table-module

The ec2_vpc_route_table module controls where network traffic is directed. It creates a route table for the specified VPC. After the route table is created, add routes and associate the table with the subnet.

Creating a route for the public subnet to the internet gateway will take variables from some of the previous plays.

The vpc_id is a required field.

Associate the subnet using the ID in the returned variable public_subnet, and route through the gateway using the ID in the returned variable ansibleVPC_igw.

Tag them for better identification. Tags are used to uniquely identify route tables within a VPC when the route_table_id is not supplied.

Routes are specified as dicts containing the key 'dest' and one of 'gateway_id', 'instance_id', 'network_interface_id', or 'vpc_peering_connection_id'. If 'gateway_id' is specified, you can refer to the VPC's IGW by using the value 'igw'.

Routes are required for present states.
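The following sketch shows one way those pieces could fit together. The tag value, the task name, and the registered variable name are assumptions; the subnet and gateway references use the variables registered in the earlier plays.

```yaml
# Sketch only: a task to place under the play's tasks key.
- name: create a route table for the public subnet   # assumed task name
  ec2_vpc_route_table:
    aws_access_key: "{{ aws_id }}"
    aws_secret_key: "{{ aws_key }}"
    region: "{{ aws_region }}"
    state: present
    vpc_id: "{{ ansibleVPC.vpc.id }}"                 # required
    tags:
      Name: rt_ansibleVPC_public                      # assumed tag value
    subnets:
      - "{{ public_subnet.subnet.id }}"               # associate the public subnet
    routes:
      - dest: 0.0.0.0/0                               # send all outbound traffic
        gateway_id: "{{ ansibleVPC_igw.gateway_id }}" # through the internet gateway
  register: public_route_table                        # assumed variable name
```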

Figure 9. ec2_vpc_route_table


- Check the syntax of the playbook, then run it.

Run the play to create the new routing table.

[spohnz@lab ~]$ ansible-playbook aws_playbook.yml

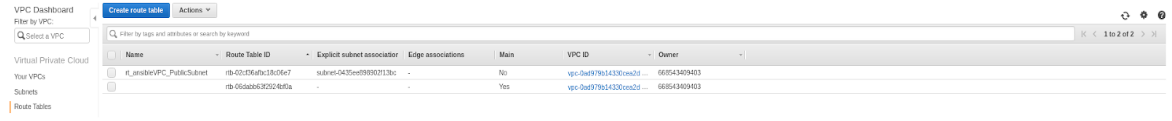

Navigate to the AWS console, click on Route Tables in the VPC dashboard to verify the creation of the route table.

Figure 10. Route Table Success


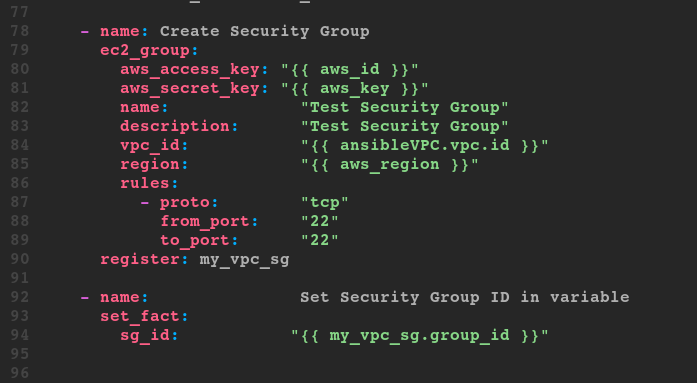

The ec2_group - maintain an ec2 VPC security group

There are many parameters for ec2_group. You can find them all at https://docs.ansible.com/ansible/latest/modules/ec2_group_module.html#ec2-group-module

Although vpc_id is not a required parameter, it is used here to associate the group with the VPC.

In order to launch an instance in AWS, you need to assign it to a particular security group.

Give your security group a descriptive name. Use unique names within the same VPC.

The security group must be in the same VPC as the resources you want to protect.

An AWS security group denies all inbound traffic by default. If you want to open a port, you need to specify it.
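As a rough sketch, a security group task along these lines would open the ports a small website needs. The group name matches the one removed by the cleanup playbook later in this post; the description, the choice of ports 22 and 80, the task name, and the registered variable name are assumptions.

```yaml
# Sketch only: a task to place under the play's tasks key.
- name: create a security group            # assumed task name
  ec2_group:
    aws_access_key: "{{ aws_id }}"
    aws_secret_key: "{{ aws_key }}"
    region: "{{ aws_region }}"
    name: Test Security Group
    description: Allow SSH and HTTP        # assumed; required when creating a group
    vpc_id: "{{ ansibleVPC.vpc.id }}"      # ties the group to our VPC
    rules:                                 # inbound rules; everything else stays closed
      - proto: tcp
        from_port: 22
        to_port: 22
        cidr_ip: 0.0.0.0/0
      - proto: tcp
        from_port: 80
        to_port: 80
        cidr_ip: 0.0.0.0/0
  register: test_sg                        # assumed variable name
```

Opening SSH to 0.0.0.0/0 is convenient for a demo; restricting cidr_ip to your own address is the safer choice.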

Figure 11. ec2_group


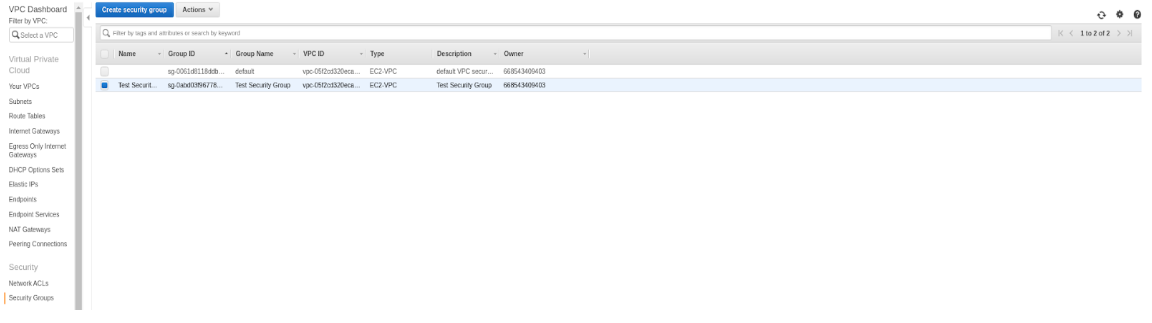

Run the playbook and verify the creation of the security group.

[spohnz@lab ~]$ ansible-playbook aws_playbook.yml

Figure 12. Image caption


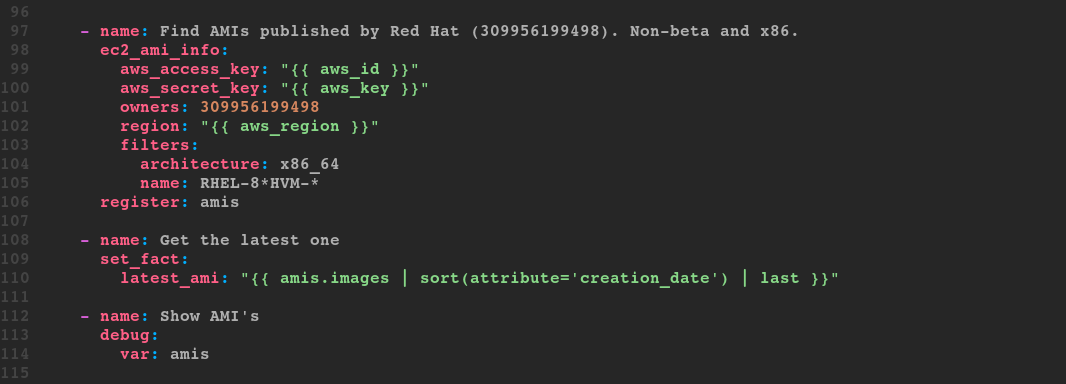

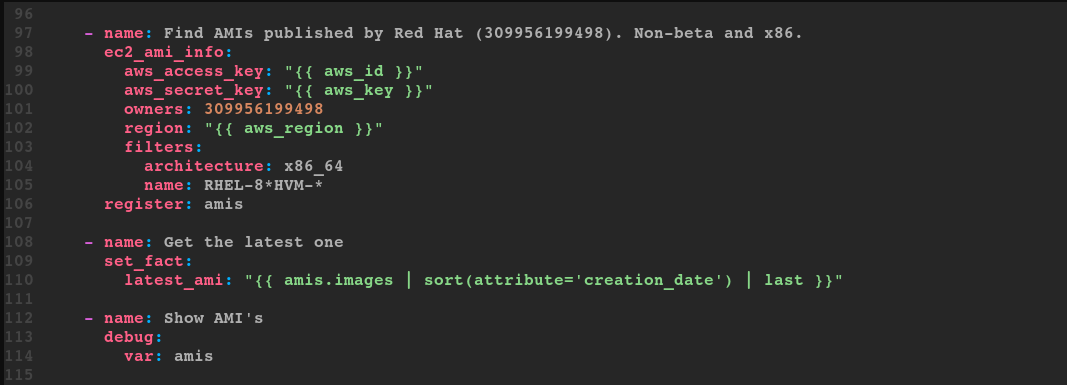

The ec2_ami_info – Gather information about ec2 AMIs

Knowing the owner ID, use Ansible to query AWS and retrieve all the RHEL images available for that region. Find the latest image by sorting on the creation date.

Review the debug printout for the list of RHEL images.

To target just the image_id for the newest image, create a variable called latest_ami to sort the data and retrieve only the newest image.
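A sketch of this lookup follows. The owner ID shown is believed to be Red Hat's AWS account, but treat it, the name filter, the task names, and the registered variable amis as assumptions to verify in your own console.

```yaml
# Sketch only: tasks to place under the play's tasks key.
- name: find RHEL 8 AMIs in the region     # assumed task name
  ec2_ami_info:
    aws_access_key: "{{ aws_id }}"
    aws_secret_key: "{{ aws_key }}"
    region: "{{ aws_region }}"
    owners:
      - "309956199498"                     # assumed Red Hat owner ID, verify before use
    filters:
      name: "RHEL-8*"                      # assumed naming pattern
      architecture: x86_64
  register: amis                           # assumed variable name

- name: list the images found
  debug:
    var: amis.images

- name: keep only the newest image
  set_fact:
    # Sort by creation date and take the last (newest) entry.
    latest_ami: "{{ amis.images | sort(attribute='creation_date') | last }}"
```

Later tasks can then refer to latest_ami.image_id.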

Figure 13. ec2_ami_info


Run the play and review the list of images.

[spohnz@lab ~]$ ansible-playbook aws_playbook.yml

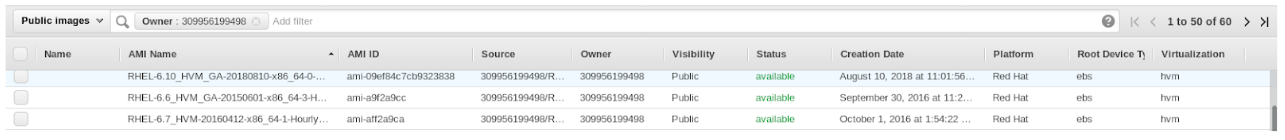

Navigate to the AWS console and click on EC2 in the Services menu. Click on AMIs in the left menu bar. To limit the output, filter by owner and change “Owned by me” to “Public images”.

Figure 14. ec2_ami_info


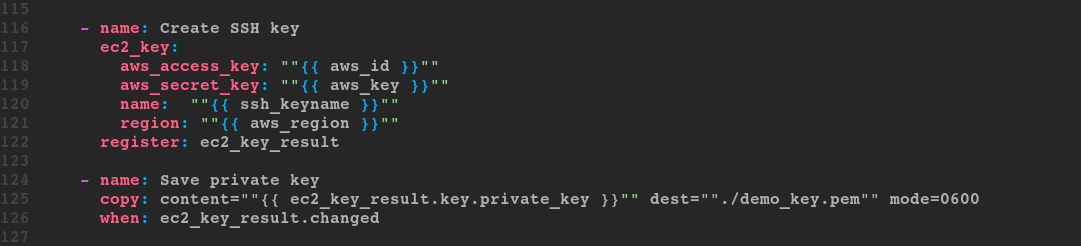

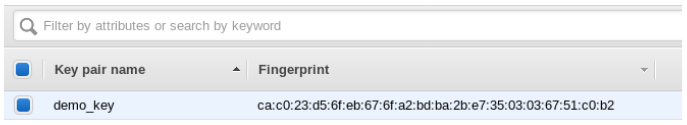

The ec2_key - create or delete an ec2 key pair

When you launch an EC2 instance, you must use an SSH Key that is located in the same region hosting the instance.

The key name comes from the ssh_keyname variable (demo_key) in our vars/info.yml file.

Create the key by running the ec2_key module. The name is required.

Use the copy module to save the private key (.pem file) to your local directory as mode 600.
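The two steps might be sketched as follows. The registered variable name, the task names, and the destination path are assumptions; note that AWS returns the private key only at the moment the key pair is created, which is why the copy task is conditional.

```yaml
# Sketch only: tasks to place under the play's tasks key.
- name: create the SSH key pair            # assumed task name
  ec2_key:
    aws_access_key: "{{ aws_id }}"
    aws_secret_key: "{{ aws_key }}"
    region: "{{ aws_region }}"
    name: "{{ ssh_keyname }}"              # required; demo_key in vars/info.yml
  register: demo_keypair                   # assumed variable name

- name: save the private key locally
  copy:
    content: "{{ demo_keypair.key.private_key }}"
    dest: "./{{ ssh_keyname }}.pem"        # assumed location
    mode: "0600"
  when: demo_keypair.changed               # the key material exists only on creation
```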

Figure 15. Image caption


Navigate to the AWS console, click on Key Pairs in the Network & Security menu to verify the creation of the demo_key keypair.

Figure 16. Image caption


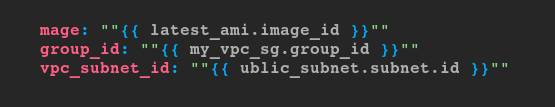

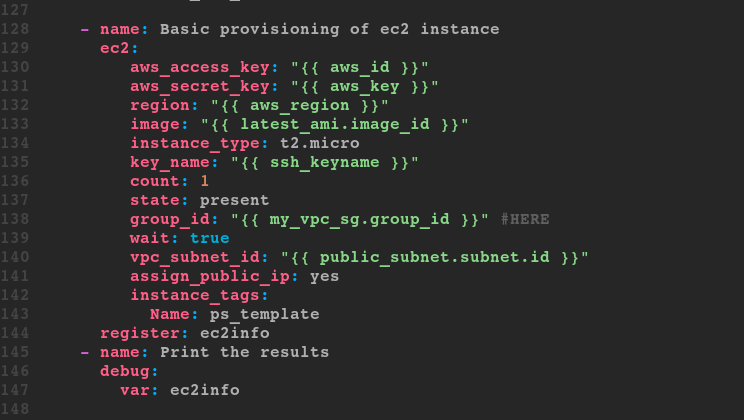

The ec2 - Create, Terminate, Start or Stop an Instance in EC2

The EC2 module allows you to create and destroy AWS instances.

Once an instance has been created, it is then available to the Provisioning modules.

Associate the AMI located from a previous play.

Declare an instance type of t2.micro

Associate the SSH key to the ec2 instance being created.

Attach the security group.

Attach the subnet.

Assign the new instance a public IP address.

There are many parameters for ec2, and several are required. https://docs.ansible.com/ansible/latest/modules/ec2_module.html

The module can launch multiple groups with multiple instances.

It can quickly stand up AMIs for separate designations.

Tag instances with the ec2_tag module for later grouping and management.

From the previous plays, we have data that we can use to create the instance.

Figure 17. Image caption


Assign public IP to them.

Use count to create two instances.

Filter the debug variable so it displays the public IP as opposed to the complete JSON return.
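Drawing on the data from the previous plays, the launch might be sketched like this. The task names, the tag, and the registered variable name are assumptions; latest_ami and public_subnet are the variables named earlier in this post, and the security group is referenced by the name used in the cleanup playbook.

```yaml
# Sketch only: tasks to place under the play's tasks key.
- name: launch two t2.micro instances      # assumed task name
  ec2:
    aws_access_key: "{{ aws_id }}"
    aws_secret_key: "{{ aws_key }}"
    region: "{{ aws_region }}"
    image: "{{ latest_ami.image_id }}"     # newest RHEL 8 AMI found earlier
    instance_type: t2.micro
    key_name: "{{ ssh_keyname }}"
    group: Test Security Group
    vpc_subnet_id: "{{ public_subnet.subnet.id }}"
    assign_public_ip: true
    count: 2
    wait: true                             # wait so the public IPs are in the result
    instance_tags:
      Name: demo_instance                  # assumed tag
  register: ec2_instances                  # assumed variable name

- name: show only the public IP addresses
  debug:
    msg: "{{ ec2_instances.instances | map(attribute='public_ip') | list }}"
```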

- Go to the AWS web console to confirm the creation of the instances.

- In the AWS web console click on the Services drop-down menu in the upper left corner then EC2 under Compute.

- Within the EC2 Dashboard, navigate to Running Instances.

- Check the box on the left for the running instance.

- Notice the public IP address.

- Click the Actions button at the top.

- Click Connect.

- Copy the example and log into the instance for software provisioning (could also use the public ip address).

Figure 18. ec2


Dynamic Inventory - ec2.py and the ec2.ini

A limited number of resources is easy to manage. For large environments of 10,000 or 100,000 instances, instances whose IP addresses change frequently, or autoscaling instances, you are going to need more flexibility. This is where the ec2.py and ec2.ini files are used. The ec2.py script is written using the Boto EC2 library and queries AWS for your running Amazon EC2 instances. The ec2.ini file is the config file for ec2.py and can be used to limit the scope of Ansible's reach.

You can specify:

- Regions

- Instance tags

- Roles

Use wget, curl, or git to pull those files down into the working directory.

wget https://raw.githubusercontent.com/ansible/ansible/devel/contrib/inventory/ec2.py

wget https://raw.githubusercontent.com/ansible/ansible/devel/contrib/inventory/ec2.ini

You will need to set some environment variables for the inventory management script. To tell Ansible to use ec2.py in place of the /etc/ansible/hosts file, run:

[spohnz@lab ~]$ export ANSIBLE_HOSTS=/your_working_drive/ec2.py

Make the file executable.

[spohnz@lab ~]$ chmod +x ec2.py

If the ec2.ini file is in a different location than ec2.py, ec2.py needs to know where to find it.

In order to tell ec2.py where the ec2.ini config file is located, run:

[spohnz@lab ~]$ export EC2_INI_PATH=/your_working_drive/ec2.ini

The ec2.ini file has the default AWS configurations, which are read by the ec2.py file. To save time, comment out the regions line in ec2.ini since we have only launched in one region.

Credentials for Boto are kept in the ~/.aws/credentials file. If the file is not there, create it.

Test the script by running:

[spohnz@lab ~]$ ./ec2.py --list

There are several ways to authenticate with AWS. The simplest is to export your keys to the environment.

[spohnz@lab ~]$ export AWS_ACCESS_KEY_ID=your_aws_access_key

[spohnz@lab ~]$ export AWS_SECRET_ACCESS_KEY=your_aws_secret_access_key

Test the script by running the list again.

[spohnz@lab ~]$ ./ec2.py --list

If nothing is running in your region, the script returns an empty JSON structure.

    {
      "_meta": {
        "hostvars": {}
      }
    }

If instances are up and running, you will get the output with all the instance details.

    ...
    "tag_demo_template": [
      "18.222.225.148"
    ],
    "tag_demo_template_db1": [
      "52.14.113.2"
    ],
    "tag_demo_template_db2": [
      "18.217.139.231"
    ],
    ...

Inventory plugins allow users to point at data sources to compile the inventory of hosts that Ansible uses in playbooks. Inventory plugins take advantage of the most recent updates to the Ansible core code. For this reason, Red Hat recommends plugins over scripts for dynamic inventory.

Specify the inventory plugin configuration file on the CLI with -i. A default inventory path can also be set with the inventory option in the [defaults] section of the ansible.cfg file or with the ANSIBLE_INVENTORY environment variable.

[spohnz@lab ~]$ ansible-inventory -i aws_ec2.yml --graph

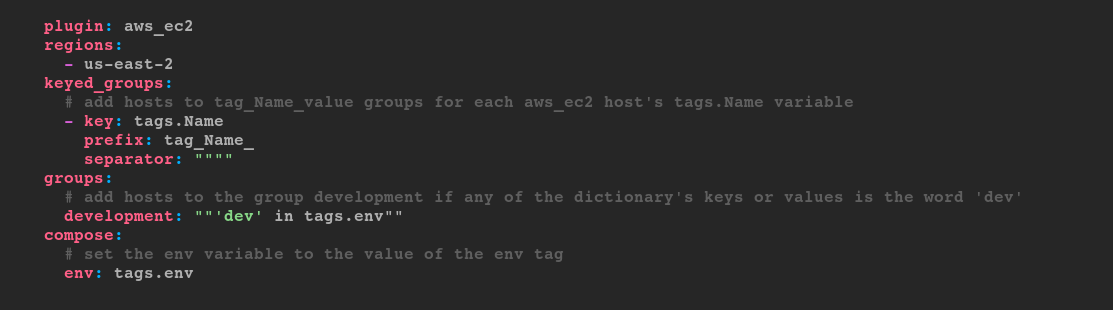

The aws_ec2 inventory plugin gets inventory hosts from Amazon Web Services EC2. It uses a YAML configuration file that ends with aws_ec2.yml or aws_ec2.yaml.

Commonly used parameters:

| Parameter | Description |
| --- | --- |
| compose | Create vars from Jinja2 expressions. |
| filters | A dictionary of filter value pairs. Available filters are listed here http://docs.aws.amazon.com/cli/latest/reference/ec2/describe-instances.html#options. |
| groups | Add hosts to a group based on Jinja2 conditionals. |
| keyed_groups | Add hosts to a group based on the values of a variable. |
| plugin | Required. Token that ensures this is a source file for the plugin. |
| regions | A list of regions in which to describe EC2 instances. If empty the default will include all regions, except possibly restricted ones like us-gov-west-1 and cn-north-1. The plugin takes longer to run when empty. |
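To illustrate how these parameters combine, here is a minimal sketch of an aws_ec2.yml file. The specific filter, group prefix, and compose expression are assumptions chosen for this walkthrough, and the plugin is assumed to pick up credentials from the AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY environment variables exported earlier.

```yaml
# aws_ec2.yml - sketch of an inventory plugin configuration (values are assumptions)
plugin: aws_ec2                 # required token identifying the plugin
regions:
  - us-east-2                   # limit the query to the region we launched in
filters:
  instance-state-name: running  # only list running instances
keyed_groups:
  - key: tags                   # build groups from instance tags
    prefix: tag
compose:
  ansible_host: public_ip_address   # connect over the public IP
```

Limiting regions keeps the inventory query fast, for the same reason given in the table above.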

Figure 19. Image caption


The ec2_ami_info

Basic procedure: find the AMI you want to customize with ec2_ami_info, launch an instance running it with ec2, modify that instance by running playbooks that apply Ansible roles and tasks on the instance, run ec2_ami on the instance to save the new AMI (taking other steps as needed so that you can find it again), and terminate the instance with ec2.

Figure 20. ec2_ami_info


Once an AMI is found, it can be launched and customized using Ansible. The ec2 module can launch an instance from the found AMI, and any other Ansible module can configure the instance once it is up. After launching and configuring an instance, the new instance can be saved as an AMI for reuse. The ec2_ami module registers or deregisters AMIs. The instance ID is needed to create a new AMI from that instance.
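The save step could be sketched as below. The variable source_instance_id is hypothetical and stands for the ID of the instance you configured; the AMI name, the tag, the task names, and the registered variable are also assumptions.

```yaml
# Sketch only: tasks to place under the play's tasks key.
- name: save the configured instance as a new AMI   # assumed task name
  ec2_ami:
    aws_access_key: "{{ aws_id }}"
    aws_secret_key: "{{ aws_key }}"
    region: "{{ aws_region }}"
    instance_id: "{{ source_instance_id }}"   # hypothetical variable holding the instance ID
    name: demo_template_ami                   # assumed AMI name
    wait: true                                # wait until the image is available
    tags:
      Name: demo_template_ami                 # tag it so it can be found again
  register: new_ami                           # assumed variable name

- name: show the new image ID
  debug:
    var: new_ami.image_id
```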

Removing Cloud resources

Basic procedure: use ec2_instance_info to register a list of running instances, use ec2 to destroy those instances, use ec2_vpc_net_info to find the VPC’s ID, delete the security groups with ec2_group, delete the subnets with ec2_vpc_subnet, delete the gateway with ec2_vpc_igw, use ec2_vpc_route_table_info to get route table information so it can be deleted with ec2_vpc_route_table, use ec2_key to delete the keypair, and finally use ec2_vpc_net to remove the VPC.

Cleaning up is a surefire way to keep from spending extra on cloud resources. Clean up cloud resources frequently to avoid incurring charges for unused ones. This maintenance can be automated with Ansible to help reduce costs.

The EC2 resources are typically removed in the reverse order of their creation. Specifically, the VPC cannot be removed until the resources that use it are removed. The following objects are dependencies that need to be removed before a VPC can be removed:

Subnets

Security Groups

Network ACLs

Internet Gateways

Egress Only Internet Gateways

Route Tables

Network Interfaces

Peering Connections

Endpoints

All the Ansible modules previously used to create the cloud resources are the same ones to use for removing the cloud resources. Most of the tasks only need the state to be changed from present to absent. To automate finding resources to clean up, use the modules appended with _info.

Modules for finding resources:

ec2_instance_info

ec2_vpc_net_info

ec2_vpc_route_table_info

Modules for removing resources:

ec2

ec2_group

ec2_vpc_subnet

ec2_vpc_igw

ec2_vpc_route_table

ec2_vpc_net

ec2_key

    ---
    - name: Remove everything
      hosts: all
      gather_facts: false
      vars:
        aws_region: us-east-2
        ssh_key_name: demo_key
        vpc_name: test_vpc_net
        vpc_cidr: 10.10.0.0/16
      tasks:
        - name: Find VPC IDs
          ec2_vpc_net_info:
            aws_access_key: "{{ vault_aws_access_key }}"
            aws_secret_key: "{{ vault_aws_secret_key }}"
            region: "{{ aws_region }}"
            filters:
              "tag:Name": "{{ vpc_name }}"
              cidr-block: "{{ vpc_cidr }}"
          register: vpc

        - block:
            - name: VPC ID
              set_fact:
                vpc_id: "{{ vpc.vpcs[0].vpc_id }}"

            - name: Find instances to remove
              ec2_instance_info:
                aws_access_key: "{{ vault_aws_access_key }}"
                aws_secret_key: "{{ vault_aws_secret_key }}"
                region: "{{ aws_region }}"
                filters:
                  vpc-id: "{{ vpc_id }}"
              register: instances

            - name: Terminate instances
              ec2:
                aws_access_key: "{{ vault_aws_access_key }}"
                aws_secret_key: "{{ vault_aws_secret_key }}"
                region: "{{ aws_region }}"
                instance_ids: "{{ item.instance_id }}"
                state: absent
                wait: true
              loop: "{{ instances.instances }}"

            - name: Remove Security Group
              ec2_group:
                aws_access_key: "{{ vault_aws_access_key }}"
                aws_secret_key: "{{ vault_aws_secret_key }}"
                region: "{{ aws_region }}"
                name: "Test Security Group"
                state: absent

            - name: Remove public subnet in "{{ aws_region }}"
              ec2_vpc_subnet:
                aws_access_key: "{{ vault_aws_access_key }}"
                aws_secret_key: "{{ vault_aws_secret_key }}"
                region: "{{ aws_region }}"
                vpc_id: "{{ vpc_id }}"
                cidr: "{{ vpc_cidr }}"
                state: absent

            - name: Remove internet gateway for ansibleVPC
              ec2_vpc_igw:
                aws_access_key: "{{ vault_aws_access_key }}"
                aws_secret_key: "{{ vault_aws_secret_key }}"
                region: "{{ aws_region }}"
                vpc_id: "{{ vpc_id }}"
                state: absent

            - name: Find route tables for removal
              ec2_vpc_route_table_info:
                aws_access_key: "{{ vault_aws_access_key }}"
                aws_secret_key: "{{ vault_aws_secret_key }}"
                region: "{{ aws_region }}"
                filters:
                  # Note the - instead of _
                  vpc-id: "{{ vpc_id }}"
              register: route_tables

            - name: Remove route tables
              ec2_vpc_route_table:
                aws_access_key: "{{ vault_aws_access_key }}"
                aws_secret_key: "{{ vault_aws_secret_key }}"
                region: "{{ aws_region }}"
                route_table_id: "{{ item.id }}"
                vpc_id: "{{ vpc_id }}"
                lookup: id
                state: absent
              loop: "{{ route_tables.route_tables }}"
              when: item.associations | length == 0 or not item.associations[0].main

            - name: Remove SSH key
              ec2_key:
                aws_access_key: "{{ vault_aws_access_key }}"
                aws_secret_key: "{{ vault_aws_secret_key }}"
                region: "{{ aws_region }}"
                name: "{{ ssh_key_name }}"
                state: absent

            - name: Remove VPC
              ec2_vpc_net:
                aws_access_key: "{{ vault_aws_access_key }}"
                aws_secret_key: "{{ vault_aws_secret_key }}"
                region: "{{ aws_region }}"
                name: "{{ vpc_name }}"
                cidr_block: "{{ vpc_cidr }}"
                state: absent
          when: vpc.vpcs | length > 0


Conclusion

Ansible, as you now know, is an important tool in my toolset. Once you start building playbooks, you will always find new things to accomplish with it.

VPCs in the cloud are no different. Check out all the cloud modules, as well as the rest of the modules, and start having fun.